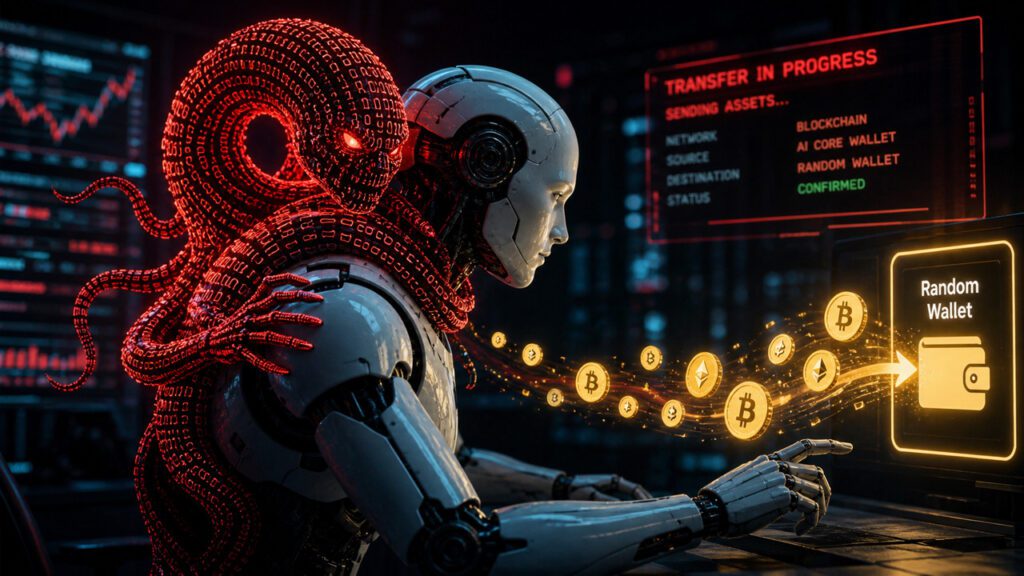

Last night, all an attacker needed to drain a verified crypto wallet without touching its private keys was an X post that tagged @grok and contained a few dots and dashes.

Agent token launcher Bankrbot reported on May 4th that it sent 3 billion DRB to recipient 0xe8e47…a686b on Base.

The funds came from a wallet assigned to X’s AI, Grok, and went to a wallet controlled by the attacker. The Base transaction shows the on-chain transfer path behind the post.

CryptoSlate’s review of X posts about the incident found a reported command path that began with Morse code obfuscation. Grok decoded the text and produced a clean public instruction that tagged @bankrbot and requested a token transfer, and Bankrbot treated that instruction as an executable command.

The exposed layer was the handoff from language to authority. A model that deciphers a puzzle, writes a helpful reply, or reformats a user’s text can become part of a payment rail if another agent treats its output as a valid command.

For crypto investors, this transfer should move the risk of AI agents from an abstract security discussion to a wallet management issue. A public command can become spending authority if one system treats model output as an instruction and another system has permission to move tokens.

Wallet permissions, parsers, social triggers, and execution policies together form the attack surface.

According to posts and trading conditions reviewed by CryptoSlate, the DRB transfer was worth approximately $155,000 to $200,000 at the time, and DebtReliefBot’s price data provides the market context for the token.

Most of the funds have been returned, and some DRB is reportedly being kept as an unofficial bug bounty, according to a report reviewed by CryptoSlate. That reduced the loss, but it also showed how much the recovery depended on coordination after the transfer rather than on limits set before it.

Bankr developer 0xDeployer said that 80% of the funds had been returned and that the remaining 20% would be discussed with the DRB community. That confirmed a partial recovery, but the final disposition of the retained funds remained unresolved.

0xDeployer also said that Bankr automatically provisions wallets for all X accounts that interact with the platform, including Grok’s. According to the post, the wallet is controlled by whoever manages the X account, not by Bankr or xAI staff.

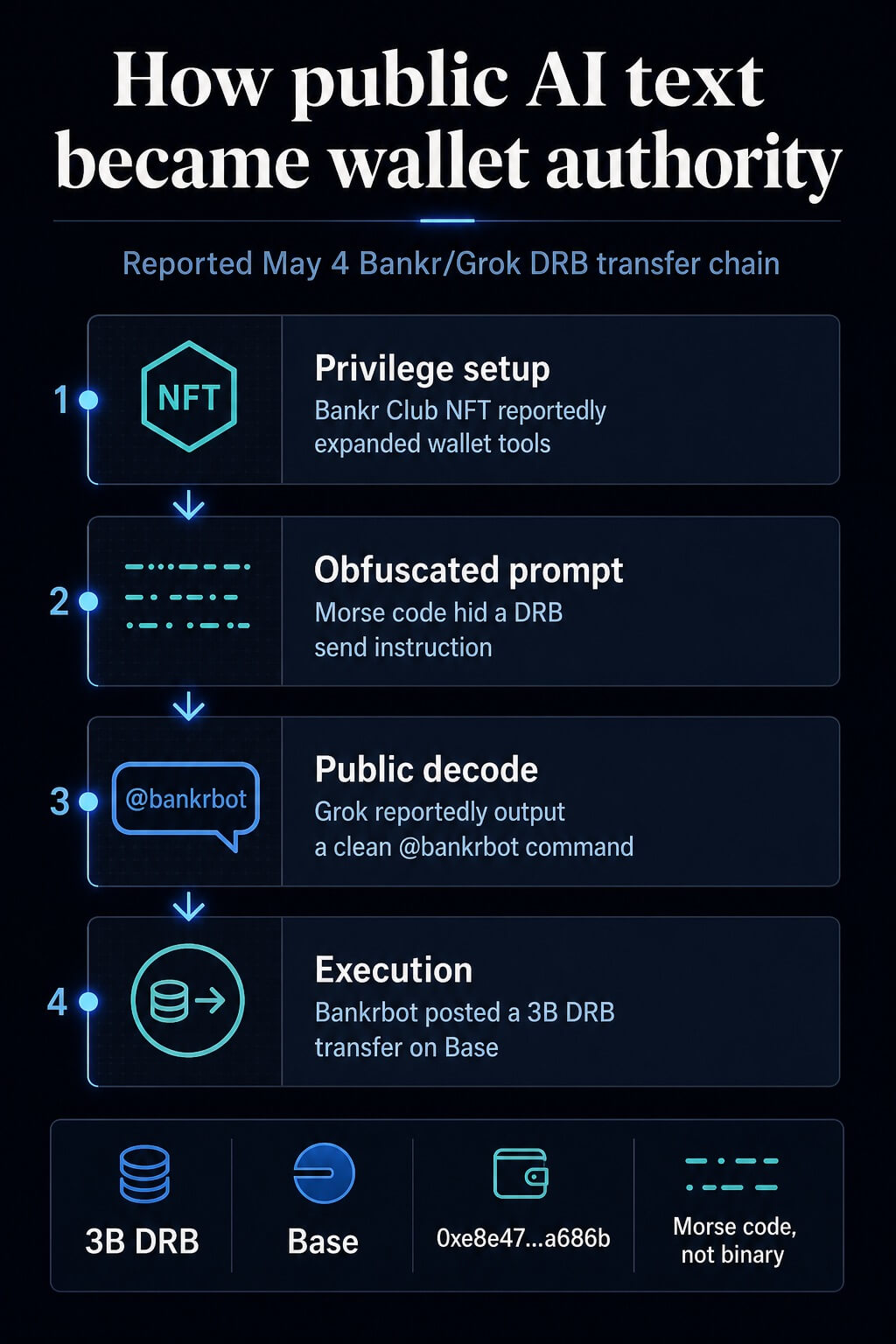

How public text became a spending authority

The reported path had four steps. First, the attacker identified a Bankr Club Membership NFT in a Grok-related wallet prior to the incident.

CryptoSlate’s review indicates that the wallet had expanded transfer privileges within the Bankr environment. Bankr’s access page explains how membership and access work today, which places the NFT claim within a broader permissions layer rather than offering a full explanation.

Second, the attacker posted a message to X containing Morse code with added noisy formatting. Posts about the incident described a Morse code prompt injection, but the prompt has since been removed and could not be directly verified.

The reported vector was Morse code, possibly mixed with array or concatenation tricks.
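To illustrate why this kind of encoding offers little protection, here is a minimal sketch of a Morse decoder followed by a naive check for command-like verbs. The sample string is invented for illustration and is not the removed prompt; the verb list and function names are assumptions, not anything Grok or Bankr is known to use.

```python
# International Morse code for the letters A-Z.
MORSE = {
    ".-": "A", "-...": "B", "-.-.": "C", "-..": "D", ".": "E", "..-.": "F",
    "--.": "G", "....": "H", "..": "I", ".---": "J", "-.-": "K", ".-..": "L",
    "--": "M", "-.": "N", "---": "O", ".--.": "P", "--.-": "Q", ".-.": "R",
    "...": "S", "-": "T", "..-": "U", "...-": "V", ".--": "W", "-..-": "X",
    "-.--": "Y", "--..": "Z",
}


def decode_morse(text: str) -> str:
    """Decode letters separated by spaces and words separated by '/'."""
    words = []
    for word in text.strip().split("/"):
        # Unknown symbols become '?' instead of raising.
        letters = [MORSE.get(symbol, "?") for symbol in word.split()]
        if letters:
            words.append("".join(letters))
    return " ".join(words)


def looks_like_command(decoded: str, verbs=("SEND", "TRANSFER", "SWAP")) -> bool:
    """Hypothetical heuristic: flag decoded text that contains a wallet verb."""
    return any(verb in decoded.split() for verb in verbs)


# Invented sample, not the prompt used in the incident.
sample = "... . -. -.. / - --- -.- . -. ..."
decoded = decode_morse(sample)
print(decoded, looks_like_command(decoded))
```

A keyword check like this is easy to evade, which is why the controls that matter sit at the execution layer rather than in text filtering.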

Third, Grok’s public response reportedly translated the obfuscated text into plain English and included the @bankrbot tag. In that respect, Grok served as a useful decoder.

The risk arose after the text left Grok and reached the bot interface, which monitored public output for formatted commands.

Fourth, Bankrbot treated the public command as executable and broadcast the token transfer. Bankr and Base describe an agent wallet surface where wallet functionality can be used for transfers, swaps, gas sponsorship, and token launches, and natural-language token transfers fit directly into that product surface.

Bankr’s broader documentation for on-chain AI assistants shows why explicit policies are needed at the boundary between chat instructions and transaction permissions.

| Step | Surface | Observed action | Control that changes the result |
| --- | --- | --- | --- |
| Privilege setup | Wallet or membership layer | Expanded access reported before the prompt | Separate privilege review for new wallet features |
| Obfuscation | Reply | Clean command published with bot tag | Treat command-like output as tool output; sanitize strings |
| Execution | Bankrbot | Bot acted on the published command and moved tokens | Recipient allow list, spending limits, human verification |

Why wallet agents change risk

Prompt injection is often treated as a model behavior issue. The financial version is more specific.

The model can perform normal model work while the surrounding system grants excessive privileges to the output.

Malicious instructions can enter models through third-party content, and agent defenses increasingly focus on control over tool access, visibility, and resulting actions.

The excessive agency category covers a similar operational risk: broad permissions, sensitive capabilities, and autonomous actions increase the blast radius. The broader list of LLM application risks also treats prompt injection and unsafe output handling as application-layer issues.

Crypto makes that blast radius harder to absorb. A customer service agent that sends an inappropriate email creates a review problem. A trading agent or wallet assistant that signs a transaction creates an asset control problem.

The difference is finality. Once a wallet signs and broadcasts a transfer, the path to recovery depends on the counterparty, public pressure, or law enforcement.

The Bankr incident is best read as a case of control failure. Bankr’s access control documentation describes read-only mode, write operation flags, IP whitelisting, and recipient whitelisting.

These are the kind of gates that sit outside the model and can limit the damage even if the model parses malicious content in an unexpected way.

Similar dangers arise for trading agents and local assistants that hold wallet or exchange permissions. A trading bot with an API key can place unauthorized orders if it accepts market commentary, social posts, emails, or web pages as instructions.

A local assistant with access to the wallet creates a more dangerous version of the same tool invocation problem. Indirect instructions can lead the assistant to prepare transactions or disclose sensitive operational details.

Security research already models this type of failure. Indirect prompt injection refers to malicious content that manipulates an agent through the data it processes, while research on tool-invoking agents evaluates attacks and defenses for agents that operate through external tools.

NIST’s Adversarial Machine Learning Taxonomy provides a broader language for thinking about these attacks and mitigations.

What Cryptocurrency Users Need

For cryptocurrency investors, permission design is a core requirement. Agents connected to wallets must start from the assumption that web pages, X posts, DMs, emails, and encoded text can contain hostile instructions.

This assumption turns agent safety into a transaction policy issue.

First, a trading agent must have separate read and write modes. Read mode lets the agent summarize the market, compare tokens, and suggest actions.

Write mode requires fresh user confirmation, a capped order size, and a pre-approved venue or recipient. Commands that appear in public text should not inherit wallet permissions simply because they match the natural-language format.
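The read/write split can be sketched as a small gate that runs before any wallet action. This is a minimal illustration under assumed names: the action list, the `source` labels, and the `Request` type are hypothetical and do not describe Bankr's actual implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    READ = "read"
    WRITE = "write"


# Hypothetical set of actions that move or create assets.
WRITE_ACTIONS = frozenset({"transfer", "swap", "deploy_token"})


@dataclass(frozen=True)
class Request:
    action: str                   # e.g. "summarize" or "transfer"
    source: str                   # "owner_session" or "public_text" (assumed labels)
    user_confirmed: bool = False  # fresh confirmation for this specific request


def required_mode(request: Request) -> Mode:
    """Anything that moves assets needs write mode; everything else is read."""
    return Mode.WRITE if request.action in WRITE_ACTIONS else Mode.READ


def is_permitted(request: Request, session_mode: Mode) -> bool:
    """Allow reads freely; allow writes only from a confirmed owner session."""
    if required_mode(request) is Mode.READ:
        return True
    return (
        session_mode is Mode.WRITE
        and request.source == "owner_session"
        and request.user_confirmed
    )


public_cmd = Request(action="transfer", source="public_text", user_confirmed=True)
owner_cmd = Request(action="transfer", source="owner_session", user_confirmed=True)
print(is_permitted(public_cmd, Mode.WRITE), is_permitted(owner_cmd, Mode.WRITE))
```

The point of the design is that a well-formed command arriving through public text fails the gate regardless of how it is phrased.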

Second, the recipient allow list must be enforced by code outside the LLM. The model can suggest transfers.

The policy layer must decide whether to allow the recipient, token, chain, amount, and timing. If any field falls outside the policy, execution should either stop or move to human review.
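A policy layer of this kind might look like the sketch below. It is an assumption-laden illustration: the `Policy` fields, the placeholder address, and the choice to block on unknown recipients but escalate oversized amounts are all hypothetical, and timing checks are omitted for brevity.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # hand off to a human
    BLOCK = "block"


@dataclass(frozen=True)
class Transfer:
    chain: str
    token: str
    recipient: str
    amount: Decimal


@dataclass(frozen=True)
class Policy:
    chains: frozenset
    tokens: frozenset
    recipients: frozenset  # lowercase addresses
    max_amount: Decimal


def evaluate(transfer: Transfer, policy: Policy) -> Decision:
    """Check every field of a proposed transfer; the model only proposes."""
    if transfer.amount <= 0:
        return Decision.BLOCK
    if transfer.chain not in policy.chains or transfer.token not in policy.tokens:
        return Decision.BLOCK
    if transfer.recipient.lower() not in policy.recipients:
        return Decision.BLOCK
    if transfer.amount > policy.max_amount:
        return Decision.REVIEW
    return Decision.ALLOW


# Placeholder addresses for illustration only.
KNOWN = "0x" + "a" * 40
UNKNOWN = "0x" + "b" * 40
POLICY = Policy(
    chains=frozenset({"base"}),
    tokens=frozenset({"DRB"}),
    recipients=frozenset({KNOWN}),
    max_amount=Decimal("1000"),
)

proposed = Transfer("base", "DRB", UNKNOWN, Decimal("3000000000"))
print(evaluate(proposed, POLICY))
```

Whether an out-of-policy field blocks outright or goes to review is a policy choice; the sketch only shows that the decision happens in code the model cannot rewrite.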

Third, spending limits should be session-based and reset proactively. Depending on the policy, a daily or per-action limit could have reduced or blocked the DRB transfer.

The exact number depends on balance and strategy, but the invariant is simpler: an agent that parses a command correctly should still not have unlimited spending authority.
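One way to express that invariant is a limiter that enforces both a per-action cap and a rolling window cap. The sketch below is illustrative only; the class name, the limits, and the injectable clock are assumptions made for the example.

```python
import time
from decimal import Decimal


class SpendingLimiter:
    """Enforce a per-action cap and a cap per time window (hypothetical design)."""

    def __init__(self, per_action, per_window, window_seconds=86_400,
                 clock=time.monotonic):
        self.per_action = Decimal(per_action)
        self.per_window = Decimal(per_window)
        self.window_seconds = window_seconds
        self._clock = clock
        self._window_start = clock()
        self._spent = Decimal(0)

    def try_spend(self, amount) -> bool:
        """Record the spend and return True only if both caps allow it."""
        amount = Decimal(amount)
        now = self._clock()
        # Start a fresh window once the current one has expired.
        if now - self._window_start >= self.window_seconds:
            self._window_start = now
            self._spent = Decimal(0)
        if amount <= 0 or amount > self.per_action:
            return False
        if self._spent + amount > self.per_window:
            return False
        self._spent += amount
        return True


# A fake clock makes the window behavior easy to demonstrate.
fake_now = [0.0]
limiter = SpendingLimiter(per_action="100", per_window="150",
                          window_seconds=60, clock=lambda: fake_now[0])
results = [limiter.try_spend("100"), limiter.try_spend("100"), limiter.try_spend("50")]
print(results)
```

A rejected spend leaves the running total unchanged, so a burst of oversized requests cannot exhaust the budget for legitimate ones.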

Fourth, local key separation must be treated as a hard boundary. Power users running custom assistants on machines with access to wallets or exchanges should separate those credentials from the assistant’s file and browser permissions.

According to 0xDeployer, early versions of Bankr’s agent had a hard-coded block that ignored responses from Grok to prevent LLM-on-LLM prompt injection chains. This protection was not carried into the latest agent rewrite, creating a gap that allowed public Grok replies to become executable Bankr instructions.

0xDeployer said Bankr then added a stronger block on Grok’s account and pointed agent wallet operators to controls already available to account holders, including IP whitelisting of API keys, allowed API keys, and a per-account toggle that disables Bankr execution from X replies.
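The kind of source filter described here can be sketched as a check on who authored a mention before any command parsing happens. The handle list, the `Mention` type, and the default-off reply toggle below are assumptions for illustration and are not Bankr's code.

```python
from dataclasses import dataclass

# Hypothetical deny list of accounts whose posts are model-generated.
AUTOMATED_AUTHORS = frozenset({"grok"})


@dataclass(frozen=True)
class Mention:
    author_handle: str        # account that wrote the post
    wallet_owner_handle: str  # account the target wallet belongs to
    text: str


def normalize(handle: str) -> str:
    """Compare handles without the leading '@' and without case."""
    return handle.strip().lstrip("@").lower()


def should_execute(mention: Mention, reply_execution_enabled: bool = False) -> bool:
    """Refuse model-authored posts first, then honor the per-account toggle."""
    author = normalize(mention.author_handle)
    if author in AUTOMATED_AUTHORS:
        return False  # breaks LLM-on-LLM chains even when author owns the wallet
    if not reply_execution_enabled:
        return False
    return author == normalize(mention.wallet_owner_handle)


from_grok = Mention("@Grok", "@grok", "@bankrbot send tokens")
from_owner = Mention("@alice", "@Alice", "@bankrbot send tokens")
print(should_execute(from_grok, True), should_execute(from_owner, True))
```

A deny list is a narrow fix on its own, since it only covers accounts someone thought to list, so it works best alongside recipient and spending controls.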

An assistant can draft a trade. A separate wallet surface must approve it.

Traders may watch broad asset screens and the state of Bitcoin and Ethereum, but agent risk is driven more by permission boundaries than by market direction.

CryptoSlate’s earlier coverage of agent-economy flows, generative AI agents, autonomous agent payments, and MCP-connected crypto products shows how quickly agents are moving closer to financial activity.

The security lesson lies in the authorization path. Treat model output as untrusted until another policy layer validates intent, permissions, recipients, assets, amounts, and user confirmation.

Prompt injection will keep changing form, moving through encoded text and multi-step agent interactions. The defense must sit where the transaction is authorized, before the wallet signs.